Traceable intelligence: Why 'black box' AI has no place in your investment strategy

As AI tools become a standard part of the researcher’s toolkit, a dangerous trend is emerging: the "Black Box" summary. You ask an AI a question about a company’s debt, it gives you a confident answer, and you’re expected to take its word for it.

But for a professional investor, "Take my word for it" isn't a due diligence strategy. It's a liability.

The hallucination headache

We’ve all heard the horror stories of AI "hallucinating": confidently stating facts that simply don't exist. In creative writing, that’s a quirk. In investment research, it’s a catastrophe. If you make a move based on a "hallucinated" margin or a fake risk factor, the market won't care that the AI was confident; it will only care that you were wrong.

This is why "Black Box" AI has no place in your strategy. You don't need an AI that gives you answers; you need an AI that gives you evidence.

Enter traceable intelligence

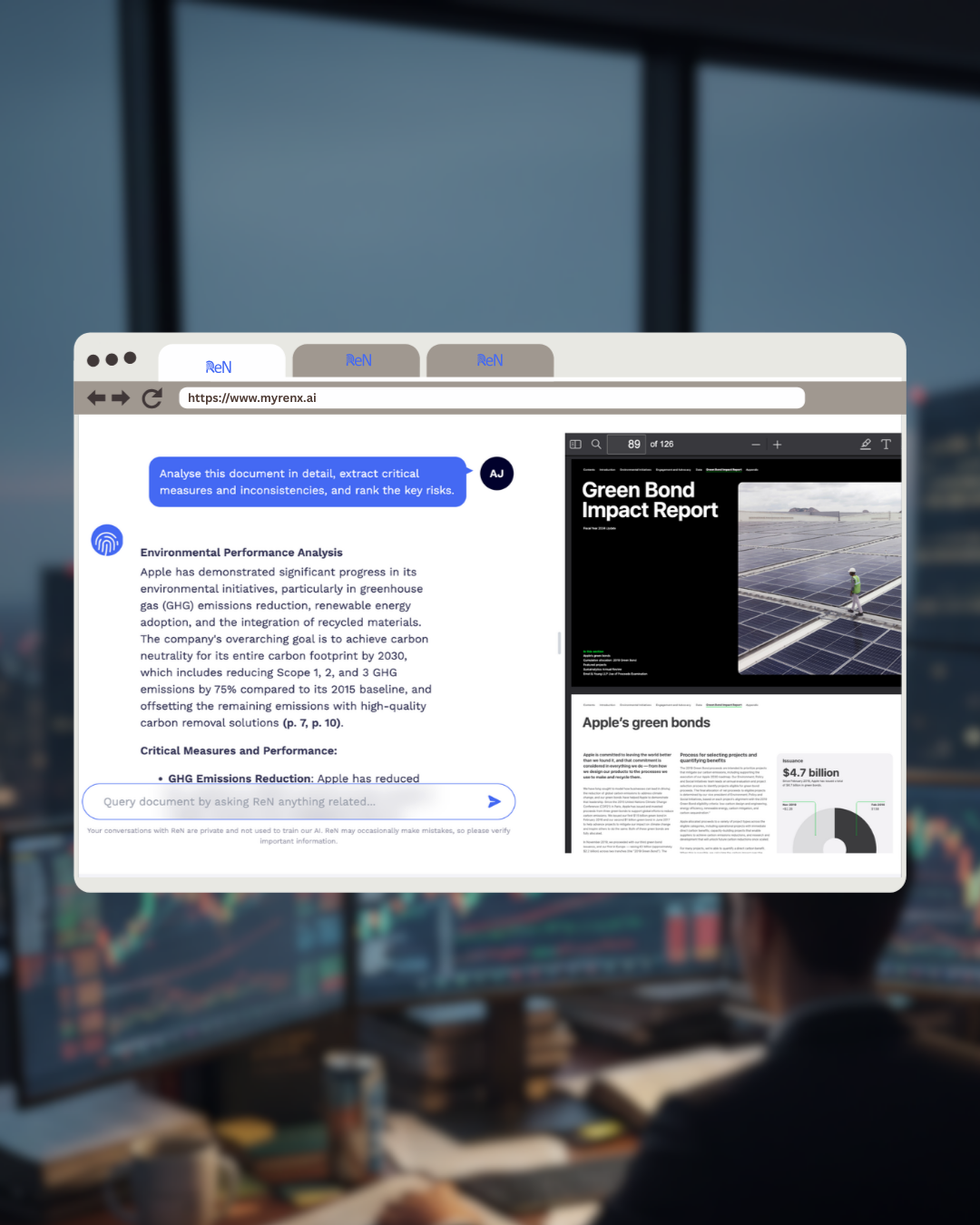

At ReN, we built our AI-native core with a "Show Your Work" philosophy. We call it Traceable Intelligence.

When ReN flags a shift in executive tone or identifies a new risk in a 10-K, it doesn't just tell you the finding exists. It provides a direct, clickable citation to the original source: the specific filing and the exact paragraph, or the precise timestamp in the earnings call.

Why traceability changes the game:

- Verification over Trust: You never have to wonder if the AI made it up. One click takes you to the raw data.

- Audit-Ready Research: When you present your thesis to a partner or a client, you aren't citing "an AI." You’re citing the SEC filings themselves, surfaced by AI.

- Context is King: AI is a powerful filter, but your human intuition is the final judge. By linking directly to the source, ReN keeps you in the driver’s seat.

Don’t just automate: validate

The goal of using AI shouldn't be to switch off your brain; it should be to free your brain from manual searching so you can focus on the analysis.

If your current AI tool doesn't show you its sources, it’s not a research tool; it’s a risk. Switch to a platform that treats data with the same level of scrutiny that you do.